The discussion centers on analyzing incoming numbers and related data formats, using Puerto Rico and Texas area codes as examples. It takes a structured, methodical approach to decoding origins, verifying formatting, and assessing legitimacy. The process emphasizes privacy-aware validation and auditable reasoning, and it flags anomalies across sources such as names and project references. The results inform routing decisions and support governance compliance, yet open questions remain about how best to handle ambiguous signals and emerging patterns.

Decode the Numbers: What Area Codes and Formats Reveal

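Area codes and number formats encode geographic, historical, and system-design information that analysts can interpret to infer regional distribution, activity, and calling patterns. In the North American Numbering Plan (NANP), a ten-digit number splits into a three-digit area code, a three-digit exchange, and a four-digit line number. Each area code is tied to a geographic region: 787 and 939 serve Puerto Rico, while Texas spans many codes, including 214, 512, and 713. Overlay codes, added when a region runs short of numbers, can also hint at when a number was issued. Treating these parts as structured signals supports methodical inference about origins, routing, and regional reach, within limits: because of number portability and mobile use, an area code shows where a number was assigned, not where its holder is today. Patterns across many numbers reveal consistency, anomalies, and possible routing constraints without exposing sensitive specifics or personal identifiers.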
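The decoding step can be sketched in Python. This is a minimal illustration under stated assumptions, not a complete implementation: it expects NANP-style input, the function name `decode_number` is hypothetical, and the lookup table is a deliberately small sample (787 and 939 for Puerto Rico, three of the many Texas codes) rather than a full registry.

```python
import re
from typing import Optional

# Illustrative sample only (assumption): a real system needs a maintained NANP dataset.
AREA_CODE_REGIONS = {
    "787": "Puerto Rico",
    "939": "Puerto Rico",
    "214": "Texas",
    "512": "Texas",
    "713": "Texas",
}

# Optional +1 country code, then area code (NPA), exchange (NXX), and line number.
# NANP rule: both the area code and the exchange start with a digit from 2 to 9.
NANP_PATTERN = re.compile(
    r"^\s*(?:\+?1[\s.-]?)?\(?([2-9]\d{2})\)?[\s.-]?([2-9]\d{2})[\s.-]?(\d{4})\s*$"
)


def decode_number(raw: str) -> Optional[dict]:
    """Split a NANP-style number into its parts and attach a coarse region label."""
    match = NANP_PATTERN.match(raw)
    if match is None:
        return None  # not a recognizable NANP format
    npa, nxx, line = match.groups()
    return {
        "normalized": f"+1{npa}{nxx}{line}",
        "area_code": npa,
        # Where the code was assigned, not necessarily where the caller is now.
        "region": AREA_CODE_REGIONS.get(npa, "unknown"),
    }
```

For example, `decode_number("(787) 555-0123")` yields the normalized form `+17875550123` with region `Puerto Rico`, while a code missing from the sample table yields `"unknown"` rather than a guess.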

Validating Data: From Formatting to Legitimacy Checks

Validation goes beyond surface formatting to establish legitimacy through systematic checks. A correctly punctuated number can still be invalid: a NANP area code cannot start with 0 or 1, and N11 codes such as 911 are reserved for services. The process therefore checks adherence to the schema, consistency across fields (does the area code match the declared region?), and source credibility, and it filters out records that fail. It also weighs privacy, examining only the fields each check needs and staying within applicable policies. Rigorous verification reduces input noise and supports reliable outcomes, provided it avoids overreach and biased assumptions about where data came from.
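A sketch of layered checks, assuming a hypothetical record schema with `phone` and `declared_region` fields and a hypothetical function name. The rules shown are standard NANP constraints (area code and exchange starting with 2 to 9, N11 service codes, and the 555-0100 to 555-0199 range reserved for fictional use); the region table is again an illustrative sample.

```python
import re
from typing import List

# Illustrative sample only (assumption), not an authoritative registry.
AREA_CODE_REGIONS = {"787": "Puerto Rico", "939": "Puerto Rico", "512": "Texas", "713": "Texas"}


def validate_record(record: dict) -> List[str]:
    """Return a list of issues for a hypothetical {"phone", "declared_region"} record."""
    phone = record.get("phone")
    if not isinstance(phone, str):
        return ["schema: phone missing or not a string"]

    digits = re.sub(r"\D", "", phone)  # strip separators and punctuation
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the NANP country code
    if len(digits) != 10:
        return ["format: expected 10 digits after normalization"]

    issues = []
    npa, nxx, line = digits[:3], digits[3:6], digits[6:]
    if npa[0] in "01" or nxx[0] in "01":
        issues.append("format: area code and exchange must start with 2-9")
    if npa[1:] == "11":
        issues.append("legitimacy: N11 codes are service codes, not area codes")
    if nxx == "555" and line.startswith("01"):
        issues.append("legitimacy: 555-0100 to 555-0199 is reserved for fictional use")

    # Cross-field consistency: only checked when both sides are known.
    expected = AREA_CODE_REGIONS.get(npa)
    declared = record.get("declared_region")
    if expected and declared and declared != expected:
        issues.append(f"consistency: area code {npa} maps to {expected}, not {declared}")
    return issues
```

Returning labeled issues instead of a bare pass/fail keeps the reason for rejecting a record visible for later review.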

Patterns and Red Flags: Spotting Anomalies Across Sources

Across data streams, anomalies appear as deviations from established norms and can signal problems with provenance, timing, or encoding. The analysis focuses on area codes, formats, and ambiguous values, looking for patterns that should trigger closer review. Typical red flags include timestamps that run out of order or fall in the future, separator styles that differ from the rest of a batch (for example, 787.555.0123 among numbers written as 512-555-0142), and improbable aggregations. Spotting these early enables rapid triage, and clear, documented criteria reduce false positives and keep the evaluation across sources disciplined rather than intrusive.
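The separator and timestamp checks can be sketched as follows. The sketch assumes each record is a dict with hypothetical `phone` and `timestamp` fields, that timestamps are ISO 8601 strings with an explicit UTC offset, and that a batch should arrive in time order; treating the most common separator style as the norm is a simple majority heuristic chosen for illustration.

```python
import re
from collections import Counter
from datetime import datetime, timezone
from typing import List, Optional


def separator_style(phone: str) -> str:
    """Summarize which non-digit characters a number uses, e.g. '-' or ' ()-'."""
    separators = re.sub(r"[\d+]", "", phone)  # drop digits and '+'
    return "".join(sorted(set(separators))) or "none"


def find_anomalies(records: List[dict], now: Optional[datetime] = None) -> List[str]:
    """Flag minority separator styles and out-of-order or future timestamps.

    Assumes hypothetical "phone" and "timestamp" fields, with ISO 8601
    timestamps that carry an explicit UTC offset (e.g. "+00:00").
    """
    if not records:
        return []
    now = now or datetime.now(timezone.utc)
    styles = [separator_style(r["phone"]) for r in records]
    dominant, _ = Counter(styles).most_common(1)[0]  # simple majority heuristic

    flags = []
    previous = None
    for i, (record, style) in enumerate(zip(records, styles)):
        if style != dominant:
            flags.append(f"record {i}: separator style {style!r} differs from {dominant!r}")
        ts = datetime.fromisoformat(record["timestamp"])
        if ts > now:
            flags.append(f"record {i}: timestamp is in the future")
        if previous is not None and ts < previous:
            flags.append(f"record {i}: timestamp earlier than previous record")
        previous = ts
    return flags
```

A majority vote is crude; a batch with no clear dominant style would need a different baseline, such as an expected format per source.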

Practical Workflows: Privacy-Conscious Validation for Communications

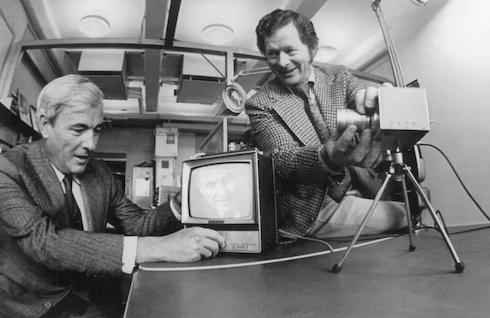

Practical workflows for privacy-conscious validation in communications formalize a disciplined way to verify data integrity while protecting user confidentiality. They rely on structured checks, minimal data exposure, and auditable decisions: invalid data patterns are caught early, access follows the principle of least privilege, and each decision is documented with its rationale. Built this way, the workflow lets teams meet privacy and compliance obligations without sacrificing efficiency or analytical rigor across communication streams.
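One way to keep audit logs useful without storing raw numbers is to record a masked form plus a keyed hash (HMAC-SHA256), so repeat appearances of the same number can be linked without revealing it. This is a sketch under assumptions: the helper names are hypothetical, input is a 10-digit string, and the key is hard-coded only for demonstration; in practice it would live in a secrets store readable only by those who need it.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Demo key only (assumption): in practice, load it from a secrets store
# and restrict who can read it.
AUDIT_KEY = b"demo-key-not-for-production"


def mask_number(digits: str) -> str:
    """Keep the area code and last two digits of a 10-digit number; hide the rest."""
    return digits[:3] + "*" * (len(digits) - 5) + digits[-2:]


def pseudonymize(digits: str, key: bytes = AUDIT_KEY) -> str:
    """Keyed hash: links repeat sightings of a number without storing it."""
    return hmac.new(key, digits.encode("utf-8"), hashlib.sha256).hexdigest()


def audit_entry(digits: str, decision: str, rationale: str) -> str:
    """Build a JSON audit record that never contains the raw number."""
    entry = {
        "at": datetime.now(timezone.utc).isoformat(),
        "number_masked": mask_number(digits),
        "number_ref": pseudonymize(digits),
        "decision": decision,
        "rationale": rationale,
    }
    return json.dumps(entry, sort_keys=True)
```

A plain, unkeyed hash would be weak here, because the space of ten-digit numbers is small enough to enumerate; the secret key is what keeps the reference from being reversed by brute force.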

Conclusion

The study keeps a detached, methodical posture throughout: it verifies formats, maps area codes, and flags oddities with clinical calm. Yet for all the meticulous checks, the data reveals only so much. Numbers hint at origins and names hint at intent, while the full picture stays behind privacy safeguards by design. The workflow delivers auditable steps with minimal disclosure, showing that rigorous governance can coexist with practical scrutiny, even as the human element, whether misdirection or motive, still calls for vigilant, ongoing observation.